Amazon Web Services

2006: Amazon launched Amazon Web Services (AWS) on a utility computing basis, although the initial release dates back to July 2002.

Amazon Web Services (AWS) is a collection of remote computing services (also called web services) that together make up a cloud computing platform, offered over the Internet by Amazon.com.

The most central and well-known of these services are Amazon EC2 (Elastic Compute Cloud) and Amazon S3 (Simple Storage Service).

Book:

Amazon Web Services is based on SOA standards, including HTTP, REST, and SOAP transfer protocols, open source and commercial operating systems, application servers, and browser-based access.

Topics:

1. Amazon S3

2. Amazon CloudFront

3. Amazon EBS

4. Amazon EFS (preview)

1). Amazon S3

· Amazon S3 is storage for the Internet.

· Amazon S3 has a simple web services interface that you can use to store and retrieve any amount of data at any time, from anywhere on the web.

· You can accomplish these tasks using the simple and intuitive web interface of the AWS Management Console.

S3 Concepts:

Buckets

· Amazon S3 stores data as objects within buckets. An object consists of a file and optionally any metadata that describes that file.

· Every object is contained in a bucket. For example, if the object named photos/puppy.jpg is stored in the johnsmith bucket, then it is addressable using the URL http://johnsmith.s3.amazonaws.com/photos/puppy.jpg

· When you upload a file, you can set permissions on the object as well as any metadata.

· Buckets are the containers for objects. You can have one or more buckets.

· You can configure buckets so that they are created in a specific Region.

· You can also configure a bucket so that every time an object is added to it, Amazon S3 generates a unique version ID and assigns it to the object.
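The addressing rule in the puppy.jpg example can be sketched in a few lines of Python. This is only an illustration: the helper name `s3_object_url` and the default endpoint argument are my own assumptions, and the URL shape simply follows the example above.

```python
from urllib.parse import quote


def s3_object_url(bucket: str, key: str, endpoint: str = "s3.amazonaws.com") -> str:
    """Build a URL of the form http://<bucket>.<endpoint>/<key>.

    Hypothetical helper for illustration; not part of any AWS SDK.
    """
    # Percent-encode the key, but keep "/" so prefixes like "photos/" stay readable.
    return f"http://{bucket}.{endpoint}/{quote(key, safe='/')}"


print(s3_object_url("johnsmith", "photos/puppy.jpg"))
# prints http://johnsmith.s3.amazonaws.com/photos/puppy.jpg
```

Whether the object is actually reachable at that URL still depends on the permissions set on the object.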

Objects

· Objects are the fundamental entities stored in Amazon S3. Objects consist of object data and metadata.

· The data portion is opaque to Amazon S3. The metadata is a set of name-value pairs that describe the object.

· These include some default metadata, such as the date last modified, and standard HTTP metadata, such as Content-Type.

· You can also specify custom metadata at the time the object is stored.

· An object is uniquely identified within a bucket by a key (Name) and a Version ID.

Keys

· A key is the unique identifier for an object within a bucket. Every object in a bucket has exactly one key.

· Because the combination of a bucket, key, and version ID uniquely identifies each object, Amazon S3 can be thought of as a basic data map between "bucket + key + version" and the object itself.

· Every object in Amazon S3 can be uniquely addressed through the combination of the web service endpoint, bucket name, key, and optionally, a version.
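To make the "bucket + key + version" map concrete, here is a toy in-memory model. It is a sketch under my own assumptions: the class `ToyS3` and its simple counter-based version IDs are invented for illustration, and real S3 version IDs are opaque strings generated by the service.

```python
import itertools
from typing import Dict, Optional, Tuple


class ToyS3:
    """In-memory stand-in for the "bucket + key + version -> object" map."""

    def __init__(self) -> None:
        self._objects: Dict[Tuple[str, str, str], bytes] = {}
        self._latest: Dict[Tuple[str, str], str] = {}
        self._counter = itertools.count(1)

    def put(self, bucket: str, key: str, data: bytes) -> str:
        # Every write gets a fresh version ID (assumption: a simple counter).
        version_id = f"v{next(self._counter)}"
        self._objects[(bucket, key, version_id)] = data
        self._latest[(bucket, key)] = version_id
        return version_id

    def get(self, bucket: str, key: str, version_id: Optional[str] = None) -> bytes:
        # Without an explicit version, fall back to the most recent one.
        if version_id is None:
            version_id = self._latest[(bucket, key)]
        return self._objects[(bucket, key, version_id)]


store = ToyS3()
first = store.put("johnsmith", "photos/puppy.jpg", b"old")
second = store.put("johnsmith", "photos/puppy.jpg", b"new")
print(store.get("johnsmith", "photos/puppy.jpg"))         # latest version
print(store.get("johnsmith", "photos/puppy.jpg", first))  # older version by ID
```

The same key maps to two different objects here, which is why the version ID has to be part of the lookup when versioning is in play.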

Regions

· You can choose the geographical region where Amazon S3 will store the buckets you create.

· You might choose a region to optimize latency, minimize costs, or address regulatory requirements.

· Objects stored in a region never leave the region unless you explicitly transfer them to another region.

2). Amazon CloudFront

· Amazon CloudFront is a web service that speeds up distribution of your static and dynamic web content, for example, .html, .css, .php, image, and media files, to end users.

· CloudFront delivers your content through a worldwide network of Edge Locations.

· When an end user requests content that you're serving with CloudFront, the user is routed to the edge location that provides the lowest latency, so content is delivered with the best possible performance.

· If the content is already in that edge location, CloudFront delivers it immediately. If the content is not currently in that edge location, CloudFront retrieves it from an Amazon S3 bucket or an HTTP server (for example, a web server) that you have identified as the source for the definitive version of your content.
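The request flow described above (route to the lowest-latency edge, serve from cache on a hit, fetch from the origin on a miss) can be modelled roughly as follows. Everything here is a simplified assumption of mine: the `EdgeLocation` class, the `route` function, the edge names, and the latency numbers are hypothetical, and real CloudFront also deals with expiry, invalidation, and much more.

```python
from typing import Callable, Dict


class EdgeLocation:
    """Toy edge cache that falls back to an origin on a cache miss."""

    def __init__(self, name: str, fetch_from_origin: Callable[[str], bytes]) -> None:
        self.name = name
        self._fetch = fetch_from_origin
        self._cache: Dict[str, bytes] = {}
        self.origin_fetches = 0

    def get(self, path: str) -> bytes:
        if path not in self._cache:
            # Cache miss: retrieve the definitive copy from the origin and keep it.
            self._cache[path] = self._fetch(path)
            self.origin_fetches += 1
        return self._cache[path]


def route(edges: Dict[str, EdgeLocation], latency_ms: Dict[str, float]) -> EdgeLocation:
    """Pick the edge with the lowest measured latency for this user."""
    return edges[min(latency_ms, key=latency_ms.get)]


# Hypothetical origin content, standing in for an S3 bucket or web server.
ORIGIN = {"/index.html": b"<h1>hello</h1>"}
edges = {name: EdgeLocation(name, ORIGIN.__getitem__) for name in ("edge-a", "edge-b")}

edge = route(edges, {"edge-a": 80.0, "edge-b": 12.0})
edge.get("/index.html")  # miss: fetched from the origin
edge.get("/index.html")  # hit: served from the edge cache
print(edge.name, edge.origin_fetches)
```

Only the first request reaches the origin; the second is answered from the edge.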

3). Amazon Elastic Block Store (Amazon EBS)

· Amazon EBS provides Block Level Storage Volumes for use with EC2 instances.

· EBS volumes are highly available and reliable storage volumes that can be attached to any running instance that is in the same Availability Zone.

· EBS volumes that are attached to an EC2 instance are exposed as storage volumes that persist independently from the life of the instance.

· With Amazon EBS, you pay only for what you use.

· Amazon EBS is recommended when data changes frequently and requires long-term persistence.

· EBS volumes are particularly well-suited for use as the primary storage for file systems, databases, or for any applications that require fine granular updates and access to raw, unformatted, block-level storage. Amazon EBS is particularly helpful for database-style applications that frequently encounter many random reads and writes across the data set.

· For simplified data encryption, you can launch your EBS volumes as encrypted volumes. Amazon EBS encryption offers you a simple encryption solution for your EBS volumes without the need for you to build, manage, and secure your own key management infrastructure. When you create an encrypted EBS volume and attach it to a supported instance type, data stored at rest on the volume, disk I/O, and snapshots created from the volume are all encrypted. The encryption occurs on the servers that host EC2 instances, providing encryption of data-in-transit from EC2 instances to EBS storage.

· Amazon EBS encryption uses AWS Key Management Service (AWS KMS) master keys when creating encrypted volumes and any snapshots created from your encrypted volumes. The first time you create an encrypted EBS volume in a region, a default master key is created for you automatically. This key is used for Amazon EBS encryption unless you select a Customer Master Key (CMK) that you created separately using the AWS Key Management Service. Creating your own CMK gives you more flexibility, including the ability to create, rotate, disable, define access controls, and audit the encryption keys used to protect your data.

· You can attach multiple volumes to the same instance within the limits specified by your AWS account. Your account has a limit on the number of EBS volumes that you can use, and the total storage available to you.

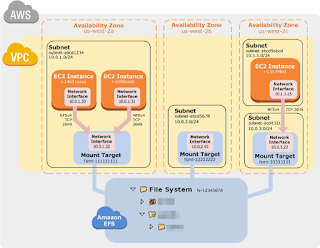
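Two of the EBS rules above are easy to lose track of: a volume can only be attached to an instance in the same Availability Zone, and the volume outlives the instance. The toy model below illustrates both. The `Volume` and `Instance` classes, the `attach` and `terminate` functions, and all IDs are hypothetical, and the sketch deliberately ignores real-world details such as delete-on-termination settings and account limits.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Volume:
    volume_id: str
    availability_zone: str
    attached_to: Optional[str] = None


@dataclass
class Instance:
    instance_id: str
    availability_zone: str
    volumes: List[Volume] = field(default_factory=list)


def attach(volume: Volume, instance: Instance) -> None:
    # A volume can only be attached within its own Availability Zone.
    if volume.availability_zone != instance.availability_zone:
        raise ValueError("volume and instance are in different Availability Zones")
    if volume.attached_to is not None:
        raise ValueError("volume is already attached")
    volume.attached_to = instance.instance_id
    instance.volumes.append(volume)


def terminate(instance: Instance) -> None:
    # The instance goes away; its volumes are detached but keep existing.
    for volume in instance.volumes:
        volume.attached_to = None
    instance.volumes.clear()


vol = Volume("vol-example", "us-east-1a")
web = Instance("i-web", "us-east-1a")
attach(vol, web)
terminate(web)
print(vol)  # still exists, no longer attached

try:
    attach(vol, Instance("i-other", "us-east-1b"))
except ValueError as err:
    print("rejected:", err)
```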

4). Amazon Elastic File System (Amazon EFS)

· Amazon EFS provides File Storage for your EC2 instances.

· With Amazon EFS, you can create a file system, mount the file system on your EC2 instances, and then read and write data from your EC2 instances to and from your file system.

· With Amazon EFS, storage capacity is elastic, growing and shrinking automatically as you add and remove files, so your applications have the storage they need, when they need it.

· Multiple Amazon EC2 instances can access an Amazon EFS file system at the same time, providing a common data source for workloads and applications running on more than one instance.

· The service is designed to be highly scalable. Amazon EFS file systems can grow to petabyte scale, drive high levels of throughput, and support thousands of concurrent NFS connections.

· Amazon EFS stores data and metadata across multiple Availability Zones in a region, providing high availability and durability.

· Amazon EFS provides read-after-write consistency.

· Amazon EFS is SSD-based and is designed to deliver low latencies for file operations. In addition, the service is designed to provide high-throughput read and write operations, and can support highly parallel workloads, efficiently handling parallel operations on the same file system from many different instances.

Note: Amazon EFS supports the NFSv4.0 protocol. The native NFS client in Microsoft Windows Server 2012 and Microsoft Windows Server 2008 supports NFSv2.0 and NFSv3.0.

Amazon Glacier

· Amazon Glacier is a storage service optimized for infrequently used data, or "cold data." (If your application requires fast or frequent access to your data, consider using Amazon S3.)

· The service provides durable and extremely low-cost storage with security features for data archiving and backup.

· With Amazon Glacier, you can store your data cost effectively for months, years, or even decades.

· Amazon Glacier enables you to offload the administrative burdens of operating and scaling storage to AWS, so you don't have to worry about capacity planning, hardware provisioning, data replication, hardware failure detection and recovery, or time-consuming hardware migrations.

· The Amazon Glacier data model core concepts include Vaults and Archives.

· Amazon Glacier is a REST-based web service. In terms of REST, vaults and archives are the resources.

· In addition, the Amazon Glacier data model includes Job and Notification-Configuration resources. These resources complement the core resources.
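Since vaults, archives, jobs, and notification configurations are all REST resources, each one is identified by a path. The sketch below builds such paths. The layout follows my understanding of the Glacier REST API (account ID, then `vaults`, then the vault name) and should be checked against the API reference; the function names, account ID, vault name, and IDs are all made up for illustration.

```python
def vault_path(account_id: str, vault: str) -> str:
    """Path of a vault resource (assumed layout, verify against the API docs)."""
    return f"/{account_id}/vaults/{vault}"


def archive_path(account_id: str, vault: str, archive_id: str) -> str:
    # Archives live inside a vault.
    return f"{vault_path(account_id, vault)}/archives/{archive_id}"


def job_path(account_id: str, vault: str, job_id: str) -> str:
    # Jobs (for example, archive retrievals) are scoped to a vault as well.
    return f"{vault_path(account_id, vault)}/jobs/{job_id}"


def notification_config_path(account_id: str, vault: str) -> str:
    # One notification configuration per vault.
    return f"{vault_path(account_id, vault)}/notification-configuration"


# Hypothetical identifiers, for illustration only.
print(vault_path("111122223333", "examplevault"))
print(archive_path("111122223333", "examplevault", "EXAMPLE-ARCHIVE-ID"))
print(job_path("111122223333", "examplevault", "EXAMPLE-JOB-ID"))
print(notification_config_path("111122223333", "examplevault"))
```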

5). AWS Import/Export

· AWS Import/Export is a service that accelerates transferring large amounts of data into and out of AWS using physical storage appliances, bypassing the Internet.

· AWS Import/Export consists of

o AWS Import/Export Snowball (Snowball), which uses on-demand, Amazon-provided secure storage appliances to physically transport terabytes to many petabytes of data, and

o AWS Import/Export Disk, which utilizes customer-provided portable devices to transfer smaller datasets.

· AWS transfers data directly onto and off of your storage devices using Amazon’s high-speed internal network.

· Your data load typically begins the next business day after your storage device arrives at AWS. After the data export or import completes, AWS returns your storage device.

· For large data sets, AWS Import/Export can be significantly faster than Internet transfer and more cost effective than upgrading your connectivity.

· AWS Import/Export supports:

o Import/Export to/from Amazon S3

o Import to Amazon EBS

o Import to Amazon Glacier
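To see why shipping a device can beat the network for large data sets, it helps to estimate how long an Internet transfer would take. The calculation below is a rough back-of-the-envelope sketch; the function name `transfer_days` and the 80% link utilization figure are my assumptions, not AWS numbers.

```python
def transfer_days(data_terabytes: float, link_mbps: float, utilization: float = 0.8) -> float:
    """Estimate days needed to push data over a network link.

    Assumes decimal units (1 TB = 10**12 bytes) and that only a fraction
    of the link (utilization, an assumed 80% by default) is usable.
    """
    bits = data_terabytes * 1e12 * 8
    effective_bits_per_second = link_mbps * 1e6 * utilization
    return bits / effective_bits_per_second / 86_400  # seconds per day


# 100 TB over a 100 Mbps link versus a 1 Gbps link.
print(round(transfer_days(100, 100), 1))    # about 115.7 days
print(round(transfer_days(100, 1000), 1))   # about 11.6 days
```

If the estimate runs to weeks or months, a physical transfer is worth pricing out.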

AWS Storage Gateway

· AWS Storage Gateway connects an on-premises software appliance with cloud-based storage to provide seamless integration (with data security features) between your on-premises IT environment and the Amazon Web Services (AWS) storage infrastructure.

· You can use the service to store data in the AWS cloud for scalable and cost-effective storage that helps maintain data security.

· AWS Storage Gateway offers both volume-based and tape-based storage solutions.

· You may choose to run AWS Storage Gateway either

o On-premises as a virtual machine (VM) appliance, or

o In AWS, as an EC2 instance.

· You deploy your gateway on an EC2 instance to provision iSCSI storage volumes in AWS.

· Gateways hosted on EC2 instances can be used for disaster recovery, data mirroring, and providing storage for applications hosted on Amazon EC2.

Regards,

Arun Manglick
